昊源金屬制品廠 匠心打造高品質馬口鐵罐、鐵盒及茶葉皮革包裝盒

在現代包裝行業中,金屬與皮革以其獨特的質感、出色的保護性能和經典的美學價值,始終占據著重要地位。昊源金屬制品廠正是這一領域的佼佼者,專注于馬口鐵罐、各類鐵盒以及融合了傳統與現代工藝的茶葉皮革包裝盒的研發、設計與生產,為客戶提供兼具實用性與藝術感的包裝解決方案。

核心主營產品

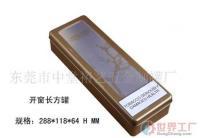

1. 馬口鐵罐

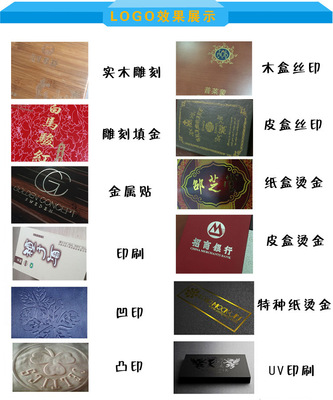

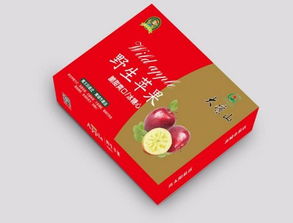

昊源金屬制品廠生產的馬口鐵罐,以其優良的密封性、防潮性和耐腐蝕性,成為食品、茶葉、糖果、化妝品等產品的理想包裝選擇。工廠采用優質鍍錫薄鋼板(馬口鐵)為原材料,通過精密沖壓、焊接、印刷及內涂工藝,確保罐體堅固美觀且安全衛生。無論是經典的圓柱罐、方罐,還是根據客戶需求定制的異形罐,都能實現批量生產,并支持多種表面處理工藝,如高清彩印、燙金、絲印等,助力品牌提升產品檔次與視覺吸引力。

2. 鐵盒

鐵盒產品線豐富多樣,廣泛應用于禮品包裝、節日商品、文具、首飾及高檔食品領域。昊源注重鐵盒的結構設計與細節工藝,從盒蓋的緊密度到鉸鏈的順滑度,都經過嚴格測試。鐵盒表面可進行精美的圖案印刷和特效處理,如浮雕、磨砂、亮光等,使其不僅是包裝容器,更是一件可收藏的藝術品,能有效延長產品的生命周期并增強用戶的開盒體驗。

3. 茶葉皮革包裝盒

針對高端茶葉市場,昊源創新性地將金屬的穩固與皮革的溫潤相結合,推出了獨具特色的茶葉皮革包裝盒。這類包裝通常以鐵盒或鐵罐作為內膽,確保茶葉的密封儲藏,外部則包裹精選的頭層皮革或環保仿皮革,輔以手工縫線、金屬扣飾或烙印LOGO等工藝。整體設計古樸典雅、觸感奢華,完美詮釋了茶文化的深厚底蘊,是商務饋贈與個人品鑒的絕佳選擇。

企業優勢與匠心承諾

昊源金屬制品廠堅持“質量為本,客戶至上”的經營理念,擁有先進的生產設備、專業的設計團隊和嚴格的質量管控體系。從原料采購到成品出廠,每一個環節都精益求精。工廠具備強大的定制化能力,可根據客戶提供的設計圖紙或概念,進行一對一打樣與開發,滿足市場對個性化、差異化包裝日益增長的需求。

昊源也積極響應綠色環保號召,在生產和材料選擇上注重可持續性,部分鐵盒與馬口鐵罐可實現循環利用,體現了企業的社會責任感。

應用與展望

昊源的產品不僅服務于國內眾多知名品牌,也遠銷海外市場。無論是需要防銹耐用的工業零件包裝,還是追求精美時尚的消費品禮盒,昊源都能提供可靠的解決方案。在昊源金屬制品廠將繼續深耕金屬與皮革包裝領域,融合新技術、新設計,致力于成為客戶最值得信賴的包裝合作伙伴,用匠心工藝為產品賦予更高的價值,守護每一份美好。

如若轉載,請注明出處:http://www.cphk.com.cn/product/54.html

更新時間:2026-04-14 19:25:56